Blog

Featured

General Finance

Nov 27, 2024 • 5 min read

Data Reasoning: The Solution For Automating Complex Data Workflows in Financial Services

Browse by category

Latest articles

Asset Management

May 27, 2025

Unlocking Efficiency: Empowering Investment Bankers With AI

General Finance

May 8, 2025

Finance Firms Favor AI’s Benefits Over Risks

Asset Management

April 29, 2025

AI for Investor Relations: Leveling the Playing Field

General Finance

April 23, 2025

The Rising Cost of Compliance Fines & How Data Reasoning Can Help

Asset Management

March 31, 2025

Using Data Reasoning For Portfolio Research & Construction

General Finance

March 20, 2025

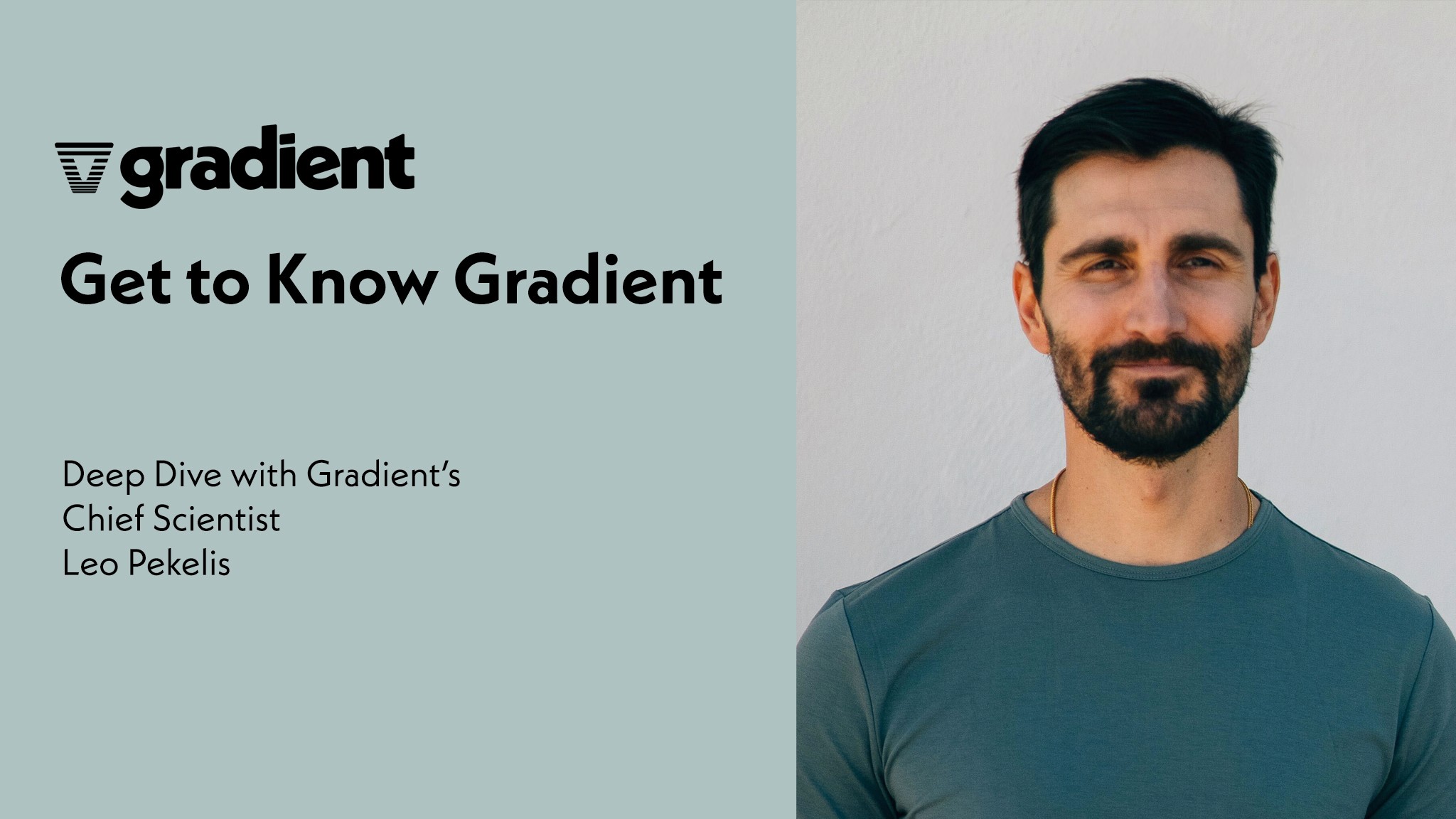

Get to Know Gradient: Leo Pekelis, Chief Scientist

Filter by tag

Data Reasoning

Unstructured Data

Thought Leadership

Financial Services

Automation

© 2025 Gradient. All rights reserved.
